Translating scientific evidence into effective policies for health and technology requires care

Acknowledgments

We are particularly thankful to Yeeun Archer Lee, Felix Cheung, and Lara Aknin for their review of this chapter. We thank the WHR team, and the other authors in this edition for their helpful comments and discussions. We also thank Dr Victoria Nash for her expertise and discussion early on in this work.

Key insights

Professional science organisations that have examined social media and adolescent mental health have reached different conclusions and policy recommendations despite examining similar research. Given their substantial influence on policy and public understanding, it is important to investigate their evidence synthesis practices.

Our analysis of three high-profile reports on social media and adolescent mental health finds that they cited broadly similar types of research, yet showed little overlap (<1%) in their sources.

We also found considerable variation in how the reports synthesise, communicate, and simplify evidence, including differences in citation accuracy, contextual detail, limitation acknowledgement, and conclusion strength.

The stakes of getting these syntheses right are substantial. Poor synthesis quality risks developing policies which may be ineffective or cause unintended harm, and may contribute to the erosion of public trust in scientific institutions more broadly.

When communicating the state of a complex scientific field, it is crucial to be honest about shortcomings and uncertainties, and to maximise fidelity to the underlying research. As scientists committed to rigorous, transparent, and replicable approaches to understanding complex phenomena, we have a responsibility to consistently uphold standards that justify claims to scientific authority and to identify opportunities for improving practices within our community.

Introduction

The question of how social media engagement relates to adolescent mental health attracts intense public concern and demand for scientific guidance.[1] With adolescents spending substantial time on social media platforms and rising adolescent mental health concerns, policymakers, parents, educators, and practitioners are increasingly seeking evidence-based guidance on appropriate responses.[2] The stakes are high: policymaking in this domain directly affects vulnerable and young populations, involves significant resources, and shapes how technology companies design platforms used by billions of young people worldwide. Getting this right is difficult, because balancing the potential benefits and unintended consequences of new policies requires rigorous synthesis and communication of the available research evidence.

Indeed, research indicates that reviewing and translating research into policy guidance is a challenging process.[3] Individual studies, however well-designed, cannot alone constitute what we know about a social phenomenon. Scientific understanding emerges from integrating findings across multiple investigations, navigating often contradictory results, weighing methodological differences, and accurately characterising what is known and what remains uncertain.[4] This synthesis work is fundamental to how research contributes to evidence-based policy. Professional science organisations, such as the American Psychological Association and the National Academies of Sciences, serve as crucial intermediaries in this process by translating complex research into accessible reports with policy recommendations designed to be read by diverse audiences.[5]

Because organisations represent distinct academic disciplines, they may synthesise studies investigating the same question yet, owing to distinct methodological and disciplinary emphases, reach markedly different conclusions. For instance, while the US Surgeon General’s Office advocates to “pursue policies that further limit access to social media for all children,”[6] the National Academies found that current evidence does not support population-level causal conclusions and noted that “the committee sympathizes with some parents’ desire for authoritative prescriptions on teenagers’ social media use but [are] also mindful of overreaching the data”,[7] cautioning against strict age limits. What should readers and policymakers make of this difference?

When authoritative bodies reach different conclusions from scientific evidence, this raises questions about the practices governing evidence synthesis in these settings. Despite the substantial influence these reports wield,[8] the processes by which organisations synthesise and communicate evidence rarely receive systematic evaluation. And yet, these synthesis practices powerfully shape both policy development[9] and public understanding. Practitioners use them to stay current with rapidly evolving research fields,[10] policymakers draw on them to inform legislative and regulatory deliberation,[11] and the general public often encounters them as accessible explanations of “what the science shows” on complex questions.[12] Given this broad influence, the quality of evidence synthesis in these documents matters considerably for downstream policy development and public understanding.

The stakes of getting synthesis reports right are substantial. Poor evidence translation risks policy ineffectiveness when interventions are poorly calibrated to what research actually demonstrates,[13] unintended harms when policies proceed on overstated evidence,[14] and the erosion of public trust in scientific institutions more broadly.[15] In a domain where scientific understanding remains genuinely uncertain, intellectual honesty about what we do and do not know with confidence serves evidence-based policy far better than premature certainty constructed through selective emphasis or strong rhetoric.

In this chapter, we take a step back from examining what makes empirical research good[16] and focus instead on how empirical research is translated into influential policy recommendations. More specifically, we investigated how professional science organisations synthesise research into clear policy guidance by analysing three high-profile US-based reports published between 2023 and 2024, from the National Academies of Sciences, Engineering, and Medicine (NASEM),[17] the American Psychological Association (APA),[18] and the US Surgeon General’s Office (OSG).[19]

We focus on three US reports for two reasons. First, these documents are highly prominent and frequently referenced in policy debates internationally, not just domestically, reflecting the intensity of US attention to social media and adolescent mental health during 2023–2024. Second, their production within an 18-month window means the organisations were theoretically drawing from a largely overlapping literature base, enabling comparison of synthesis practices while controlling for both national policy context and evidence availability. This temporal proximity is particularly important given how rapidly the social media effects literature evolves.

We acknowledge that similar evidence syntheses have been produced in other regions, including reports from the WHO,[20] OECD,[21] and various national governments.[22] A comprehensive cross-national comparison was beyond our scope, but we encourage readers to consider how the evaluative framework applied here might illuminate evidence translation practices in these diverse global contexts.

We classified the peer-reviewed research on social media and mental health cited across the reports by methodological characteristics, study design features, and thematic content to determine how organisations identified evidence bases. We also conducted a qualitative analysis of how organisations synthesised and communicated their selected evidence, examining citation accuracy, evidence integration practices, acknowledgment of limitations and contradictory findings, and rhetorical construction of conclusions. This mixed-methods approach enabled us to distinguish between differences stemming from evidence selection versus differences in synthesis and communication practices. In this chapter, we present our analysis, discuss what our findings reveal about evidence synthesis practices, consider implications for evidence-based policy in contested domains, and offer recommendations for improving synthesis quality based on our observations.

We retain confidence that scientific research can meaningfully inform policy deliberation on complex social questions, like the relationship between social media and adolescent wellbeing. However, realising this potential requires greater attention to the standards and practices governing how scientific research is synthesised and translated for policy guidance.

Background

The challenge of evidence-based policy in the social sciences

The evidence-based policy (EBP) movement argues that systematic use of research evidence can improve policy effectiveness, reduce unintended consequences, and promote democratic accountability by providing more transparency in the policy decision process.[23] This evidence-based policy movement emerged from parallel developments in evidence-based medicine and the broader “what works” and “modernising government” agendas in social policy in the early 2000s.[24] While EBP has seen success in medicine, the transition between scientific research and policy decisions in the social sciences is rarely straightforward.[25] Social interventions often involve complex relationships difficult to isolate experimentally, ethical constraints limiting experimental manipulation, and contextual factors affecting generalisability.[26] Early EBP frameworks emphasised selecting high-quality evidence through hierarchies privileging randomised trials and systematic reviews,[27] but scholars increasingly recognise that directly applying medical approaches fails to account for social complexity.[28]

Furthermore, even with clear causal evidence, social policies are guided by values, societal goals, and political factors beyond empirical findings alone.[29] Parkhurst makes an important distinction between selecting “good evidence” and ensuring “good governance of evidence” which recognises that high-quality evidence is necessary but insufficient without faithful stewardship within complex decision-making processes.[30] Contemporary scholarship has moved beyond simple linear models to recognise evidence-based policy as fundamentally political, with research representing one input among many competing considerations.[31] IJzerman and colleagues propose “evidence-readiness levels” for the social sciences considering replication status, theoretical grounding, and policy applicability beyond methodological rigour alone.[32] While such frameworks represent crucial advances in evidence quality assessment, they should be coupled with increased attention to how evidence is synthesised and communicated for policy audiences.

The current state of social media and adolescent mental health science

This domain presents particularly acute translation challenges: (a) methodologically limited evidence, (b) high public stakes, (c) intense political pressure for guidance, and (d) a recent proliferation of competing, overlapping professional statements addressing similar questions.

Despite demand for definitive guidance, experts in adolescent mental health have raised significant concerns about methodological limitations in the evidence base that constrain the ability to establish causal relationships or generalise findings to broader policy-relevant contexts.[33] One fundamental challenge is the difficulty in defining, testing, and demonstrating convincing evidence of causal relationships. Much evidence relies on correlational designs, with limited longitudinal research examining effects over time.[34] Additionally, it is incredibly challenging to conduct true experimental work in this domain given the near ubiquity of social media in adolescent lives. Even experimental studies employing social media “detox” strategies face significant limitations, as participants remain embedded in social environments where social media presence is pervasive.[35]

Conceptually, screen time research faces challenges: time is finite, so increased screen time necessarily entails decreased time spent on other activities. Displacement theory highlights that even when studies demonstrate associations between screen time and poorer outcomes, it remains unclear whether effects stem from the presence of screen time itself or from the absence of displaced activities — sleep, exercise, socialising — or some combination.[36] Most research measures only screen time without capturing broader time-use patterns, limiting our ability to identify mechanisms.

Correlational studies suffer from additional confounding factors, including generational differences (in both social media usage and mental health outcomes), which make it difficult to isolate the specific contribution of social media use from other contemporary influences on mental health outcomes such as climate anxiety,[37] economic factors,[38] or increased mental health awareness and resultant clinical diagnoses.[39] Additional methodological challenges include self-report measurement limitations concerning both time spent on social media platforms and mental health indicators, which compromise the ability to validate findings or conduct meaningful comparisons across studies.[40] The literature consistently indicates that users, particularly adolescents, are poor estimators of their true screen time use.[41] Earlier research treated social media as homogeneous, focusing primarily on aggregate device screen time. Following critiques,[42] recent work adopts more sophisticated approaches, disaggregating platforms and considering specific features.

Longitudinal studies generally report small or mixed effects, with meta-analyses highlighting limited practical significance despite statistical significance.[43] Considering publication bias favouring significant findings, some experts argue effects are likely very small at population levels.[44] The research base also suffers from limited sample diversity, predominantly featuring educated, affluent adolescents despite supporting nationwide frameworks,[45] with substantial portions conducted with adult rather than adolescent samples.[46]

These limitations exist within the broader context of psychology’s “replication crisis” and subsequent reforms.[47] The scientific community has increasingly emphasised improving research production through open science practices including pre-registration of study designs, data and code sharing, comprehensive conflict of interest reporting, and systematic replication efforts.[48] Despite the substantial attention on improving research production, less systematic scrutiny addresses how research synthesis might also be improved.

Professional science organisations and evidence synthesis

Professional science organisations occupy a distinctive position in this landscape.[49] Unlike advocacy groups, commercial interests, or individual commentators, these organisations explicitly claim scientific authority for their conclusions, positioning themselves as representing “what the research shows” or articulating “scientific consensus.” These organisations are frequently called on to provide authoritative scientific guidance on topics including environmental and climate change research, public health issues such as COVID-19, and education research.[50] Such reports are influential in the policymaking process and also contribute to broader public understanding of important social topics.[51] When organisations with similar mandates reach different policy conclusions, it raises questions about the quality of synthesis processes.

Standards for evidence synthesis vary considerably across contexts. Systematic reviews and meta-analyses in scientific publishing follow established protocols including PRISMA and Cochrane guidelines specifying transparent search strategies, explicit inclusion criteria, and structured quality assessment.[52] However, policy-facing evidence syntheses take many forms, and operate without industry-wide standardised methodological frameworks. In some domains, organisations have worked to establish their own frameworks to promote clear and accurate syntheses of evidence.[53]

Few studies have examined evidence use in the social media and adolescent mental health domain specifically. Richards and colleagues[54] analysed evidence cited in US pre-trial filings against social media companies, revealing selective referencing including reliance on outdated research, limited population samples, and under-specified health outcomes. Their descriptive mapping approach quantified evidence characteristics related to scientific rigour, including methodology, thematic appropriateness, and population specificity. Examining evidence communication across policy documents, Elson and colleagues[55] analysed professional organisation policies on a wide range of media effects produced before 2018, finding systematic translation issues including overstatement of causal claims, selective citation, and inadequate representation of methodological limitations. Their work established approaches for evaluating translation fidelity, though it did not examine how individual evidence pieces are represented.

The present study

This chapter presents a systematic analysis of three major policy documents addressing social media and adolescent mental health, issued contemporaneously between 2023 and 2024 by the American Psychological Association (APA), the National Academies of Sciences, Engineering, and Medicine (NASEM), and the US Surgeon General’s Office (OSG). These organisations are well-resourced, scientifically sophisticated actors with explicit mandates to provide authoritative evidence synthesis for policy purposes, making their work especially informative for understanding how evidence syntheses are conducted in practice.

However, it is important to acknowledge that these organisations differ in institutional character and stated aims. Their reports also varied in scope: the OSG advisory aimed to “call attention to growing concerns” and provide urgent, actionable recommendations; the APA advisories sought to “summarise psychological science for stakeholders”; and NASEM explicitly aimed to “comprehensively examine current research” through systematic review, which partly explains its substantially greater length. All three focused broadly on adolescent health outcomes in relation to social media, encompassing both clinical mental health indicators and broader wellbeing measures, though the OSG report focuses more heavily on mental health outcomes specifically. Table 4.1 outlines some key differences in the organisation types, stated goals, and policy conclusions of these three reports.

We employ a mixed-methods approach building on prior frameworks for critically appraising policy statement quality. First, we characterise what evidence the reports cite. Our quantitative analysis adapts Richards et al.'s citation mapping methodology[56] to characterise the evidence base underlying each report, systematically coding all 617 unique academic sources for methodological characteristics, study design features, and thematic content. This enables comparison of whether organisations drew upon fundamentally different evidence or selected from similar research pools. Second, our qualitative analysis extends Elson and colleagues’ framework,[57] which identifies common problems in how evidence is communicated, to examine how organisations synthesised and communicated selected evidence, including citation accuracy, evidence integration practices, and rhetorical construction of conclusions.

Table 4.1 presents the policy documents analysed, highlighting key organisational differences and divergent policy positions. Despite all three organisations examining scientific understanding of social media and mental health during the same period, they reached notably different conclusions with correspondingly different policy recommendations ranging from urgent calls for population-level age restrictions to cautions against interventions that outpace empirical support. Please refer to Online Appendix 4A for a detailed comparison of the conclusions and policy positions of the three reports.

| Organisation | Org type | Report title | Date | Length | Stated goals | Key conclusions and recommendations |
|---|---|---|---|---|---|---|
| APA | Professional scientific association | Health advisory on social media use in adolescents | May 2023 | 6 pp. | Science-informed recommendations for stakeholders | Concludes the use of social media is not inherently beneficial or harmful to young people. Recommends industry standards, parental monitoring, and platform design changes to prioritise youth safety. |
| | | Potential risks of content, features, and functions | Apr 2024 | 11 pp. | Elaborate on science relevant to policy solutions | Focuses on specific platform features requiring modification; emphasises design-level interventions. |
| NASEM | Congressionally chartered advisory body | Social media and adolescent health | Dec 2024 | 287 pp. | Comprehensive systematic examination of current research | Finds the literature did not support the conclusion that social media causes changes in adolescent health at the population level. Concludes social media can both harm and improve adolescent health. Cautions against population-level interventions; emphasises individual differences and potential benefits alongside risks. |
| OSG | Federal government office | Social media and youth mental health | May 2023 | 25 pp. | Call urgent attention; provide actionable recommendations | Advocates pursuing policies to further limit access including strengthening age minimums. Strengthen protections to ensure greater safety for children interacting on social media platforms. Characterises there being insufficient evidence to conclude platforms are sufficiently safe for youth. |

What types of evidence are included?

Methods

This study was exploratory rather than confirmatory, aimed at characterising patterns in evidence use across policy documents rather than testing pre-specified hypotheses. Accordingly, it was not pre-registered. We systematically analysed all citations from the three policy documents, extracting 617 unique peer-reviewed scientific articles from 1,063 total citations, to examine whether organisations’ different policy conclusions reflected systematic differences in evidence selection patterns. Given our focus on social media effects research in particular, we only retained articles whose primary focus was on social media effects (n = 355). This criterion excluded articles written primarily about non-media-related neurological development, sleep, media literacy, and education technology. We defined social media very broadly, deferring to whether each article positioned itself as investigating social media. This means articles with varying definitions, some encompassing social networking, smartphone use, or general screen time, were included, whereas articles focusing only on video games were excluded. We acknowledge the difficulty of drawing a line in the sand here. These criteria were constructed to enable our analysis to focus as far as possible on research that underlies much of the debate surrounding social media’s effects, which has historically drawn on the broader literature on screen time and smartphone use.

Following Richards et al.'s[58] framework for evaluating social media evidence use in policy statements, we categorised each cited article along three dimensions: study methodology, thematic focus, and sample characteristics.[59] This mapping enabled systematic comparison of what types of social media research each organisation drew upon to support policy recommendations. We also assessed whether each study’s methodology could plausibly support causal inferences,[60] given the centrality of causal claims in policy debates about social media effects.

Given the large citation volume, we employed an AI-assisted classification system with validation protocols to promote reliability.[61] The system processed article abstracts using structured prompts, with explicit instructions to respond with “inconclusive” when information was unclear or required inference beyond explicit abstract content. We implemented formal validation testing to ensure classification accuracy before proceeding with full analysis. Due to resource constraints, we did not conduct full-text reviews of articles classified as “inconclusive”; these remained coded as such. Full reporting on inconclusive classifications appears in the results.

Chi-square tests of independence compared categorical distributions across organisations,[62] with appropriate corrections for multiple testing.[63] Given substantial sample size differences between reports, we conducted sensitivity analyses to assess whether findings remained robust to these imbalances. Statistical significance was assessed using both raw and corrected p-values at conventional α = 0.05, with effect sizes reported to distinguish statistical from practical significance. More methodological detail is available in Online Appendix 4B.
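To make the testing procedure concrete, the following is a minimal sketch of a chi-square test of independence with an effect size and a multiple-testing correction. It is an illustration under stated assumptions, not the authors' analysis code: the chapter does not name the effect size or correction used, so Cramér's V and the Holm step-down procedure are assumed here, and the function names are hypothetical. The example table uses the per-report causality counts from Table 4.3; because some articles are cited by more than one report, the rows are not strictly independent samples.

```python
from math import sqrt

def chi_square_independence(table):
    """Return (statistic, degrees_of_freedom, cramers_v) for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of rows and columns.
            expected = row_totals[i] * col_totals[j] / n
            statistic += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    k = min(len(table), len(table[0]))
    cramers_v = sqrt(statistic / (n * (k - 1)))  # assumed effect size
    return statistic, dof, cramers_v

def holm_adjust(p_values):
    """Holm step-down adjusted p-values (assumed correction), in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        candidate = min(1.0, (m - rank) * p_values[idx])
        running_max = max(running_max, candidate)  # enforce monotonicity
        adjusted[idx] = running_max
    return adjusted

# Rows: APA, NASEM, OSG. Columns: causal inference plausible, not determinable.
causality_counts = [[7, 34], [44, 243], [15, 34]]
statistic, dof, cramers_v = chi_square_independence(causality_counts)
print(f"chi2 = {statistic:.2f}, dof = {dof}, Cramer's V = {cramers_v:.2f}")

# Illustrative raw p-values only; these are not results from the chapter.
print(holm_adjust([0.01, 0.04, 0.03]))
```

Reporting the effect size alongside the corrected p-value is what lets a reader separate a statistically detectable difference from a practically meaningful one.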

Results

What types of evidence did organisations cite?

We analysed 1,063 total citations across the three reports to understand what types of sources these drew upon. Reports showed small but significantly different patterns in their sources,[64] though these differences may reflect varying approaches to supplementing core academic evidence rather than fundamental distinctions in evidential foundations.

The APA reports relied most heavily on peer-reviewed research (92%, k = 77), while the OSG and NASEM reports incorporated more diverse source types, with journal articles comprising 62% (k = 64) and 60% (k = 527) of their citations, respectively. However, these proportional differences mask important absolute numbers: the NASEM report’s 60% still represented 527 peer-reviewed studies, nearly seven times more academic research than the APA reports and over eight times more than the OSG report. The OSG and NASEM reports’ incorporation of diverse sources should be understood as expanding rather than substituting for scientific research. Table 4.2 shows the complete distribution of citation types.

| Item type | APA n (%) | NASEM n (%) | OSG n (%) | Total n (%) |
|---|---|---|---|---|
| Journal articles | 77 (91.7) | 527 (60.2) | 64 (62.1) | 668 (62.8) |
| Reports | 2 (2.4) | 86 (9.8) | 16 (15.5) | 104 (9.8) |
| Documents | 2 (2.4) | 83 (9.5) | 14 (13.6) | 99 (9.3) |
| News articles (a) | 0 (0.0) | 78 (8.9) | 4 (3.9) | 82 (7.7) |
| Books (b) | 2 (2.4) | 45 (5.1) | 3 (2.9) | 50 (4.7) |
| Other academic publications (c) | 0 (0.0) | 40 (4.6) | 0 (0.0) | 40 (3.8) |
| Other online media (d) | 1 (1.2) | 17 (1.9) | 2 (1.9) | 20 (1.9) |
| Total | 84 (100.0) | 876 (100.0) | 103 (100.0) | 1,063 (100.0) |

Notes: (a) Includes online newspaper or magazine articles. (b) Includes books and book sections. (c) Includes conference papers, preprints, and theses. (d) Includes blog posts, miscellaneous webpages, and video recordings.

The OSG report showed greater proportional reliance on professional reports (15.5%) and published documents (13.6%), while the NASEM report uniquely incorporated news articles (8.9%) and other academic publications including preprints and conference papers (4.6%). Notably, 20% of the APA reports’ citations referenced professional reports from organisations including itself, the AAP, and similar bodies.

These differences in source type distribution, while statistically significant, primarily reflect organisational approaches to incorporating supplementary materials alongside substantial cores of peer-reviewed literature. The characteristics of the academic literature selected — which formed the majority of citations across all organisations — showed considerably more convergence, as examined in the subsequent analysis.
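The proportions and ratios quoted in this section can be re-derived from the counts in Table 4.2. The short sketch below does so; the dictionary and function names are hypothetical and only the counts come from the table.

```python
# Counts copied from Table 4.2: (journal articles, total citations) per report.
TABLE_4_2 = {
    "APA": (77, 84),
    "NASEM": (527, 876),
    "OSG": (64, 103),
}

def journal_share(report):
    """Percentage of a report's citations that are journal articles."""
    journal, total = TABLE_4_2[report]
    return round(100 * journal / total, 1)

def journal_ratio(report_a, report_b):
    """How many times more journal articles report_a cites than report_b."""
    return TABLE_4_2[report_a][0] / TABLE_4_2[report_b][0]

for name in TABLE_4_2:
    print(name, journal_share(name))           # 91.7, 60.2, 62.1
print(round(journal_ratio("NASEM", "APA"), 2))  # 6.84
print(round(journal_ratio("NASEM", "OSG"), 2))  # 8.23
```

The ratios show why the percentage comparison alone is misleading: the report with the lowest journal-article share cites the most journal articles in absolute terms.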

How did citations overlap across organisations?

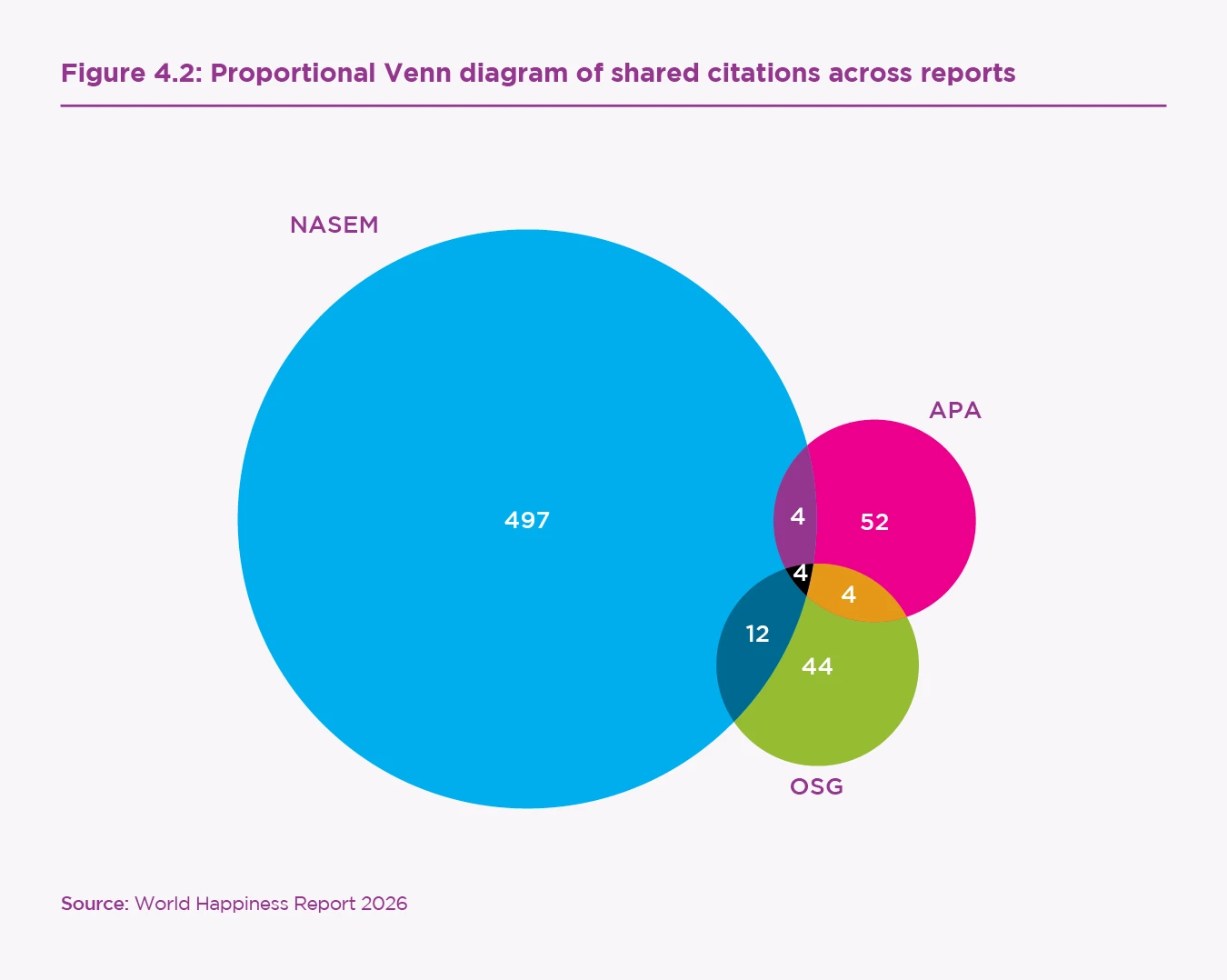

Despite organisations’ broadly similar scientific evidence characteristics, analysis of citation overlap revealed very few shared pieces of literature. Of the 668 total cited journal articles, there were 617 unique works, with 24 articles present in more than one report. Only four citations appeared across all three documents, representing less than 1% of the total unique academic literature.[65] The remaining 20 appeared in two of the three reports. Figure 4.2 visualises these shared citation patterns. Online Appendix 4C lists the overlapping articles.
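An overlap tally of this kind can be sketched with a simple counter over per-report citation sets. The example below is a hedged illustration: the function name and the toy identifiers are hypothetical, and the chapter does not describe how citations were matched (for instance by DOI or title), so exact-identifier matching is assumed.

```python
from collections import Counter

def overlap_summary(citations_by_report):
    """Count unique works and how many appear in all reports or exactly two."""
    counts = Counter()
    for works in citations_by_report.values():
        counts.update(set(works))  # de-duplicate within each report first
    n_reports = len(citations_by_report)
    return {
        "unique": len(counts),
        "in_all": sum(1 for c in counts.values() if c == n_reports),
        "in_exactly_two": sum(1 for c in counts.values() if c == 2),
    }

# Toy identifiers standing in for DOIs; not the actual cited works.
toy = {
    "APA": ["a", "b", "c"],
    "NASEM": ["a", "b", "d", "e"],
    "OSG": ["a", "e", "f"],
}
print(overlap_summary(toy))

# Share of unique works cited by all three reports, using the reported counts.
print(round(100 * 4 / 617, 2))  # 0.65, i.e. under 1%
```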

This low overlap raised questions about whether organisations were drawing from different but equally impactful segments of the research landscape, or whether some organisations might be systematically selecting less influential or peripheral studies. Of particular concern was the observation that 20% (k = 17) of the APA report’s academic citations were authored by members of the report’s own advisory panel, suggesting potential bias toward self-citation rather than field-representative selection. The NASEM report also included several studies written by its committee members, but these made up a considerably smaller share of its overall scientific evidence base (3%, k = 27).

Methodological characteristics

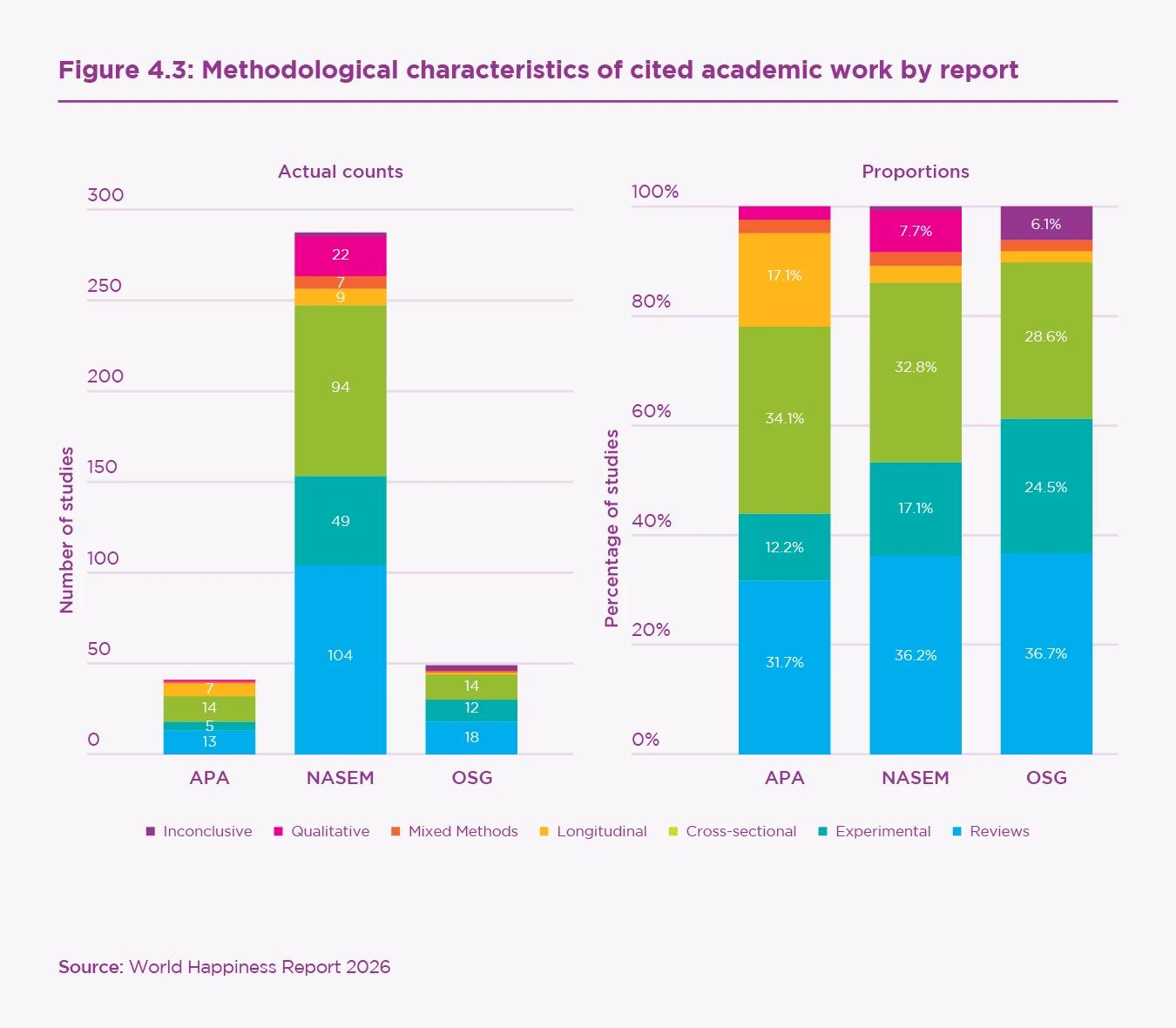

To understand the types of research organisations selected, we systematically categorised all 617 unique peer-reviewed articles. Our analysis focused on the 355 articles whose primary topic was social media and adolescent health, which comprised 53% of the APA reports’ journal articles, 55% of the NASEM report’s, and 77% of the OSG report’s.[66] The academic literature cited across all organisations showed similar methodological distributions. The most frequently cited study types were meta-analyses or systematic reviews (35.8%, k = 127), cross-sectional studies (31.3%, k = 111), experimental studies (17.5%, k = 62), ethnographic or qualitative studies (6.5%, k = 23), and longitudinal designs (4.8%, k = 17). Notably, only 16.6% (k = 59) of cited articles employed methodologies that could plausibly support causal inferences.[67] Table 4.3 shows methodological characteristics by report.[68]

| Study characteristics | APA n (%) | NASEM n (%) | OSG n (%) | Total n (%) |
|---|---|---|---|---|
| Methods | | | | |
| Meta-analyses, systematic, or narrative reviews | 13 (31.7) | 104 (36.2) | 18 (36.7) | 127 (35.8) |
| Experimental studies | 5 (12.2) | 49 (17.1) | 12 (24.5) | 62 (17.5) |
| Cross-sectional or cohort studies | 14 (34.1) | 94 (32.8) | 14 (28.6) | 111 (31.3) |
| Longitudinal studies | 7 (17.1) | 9 (3.1) | 1 (2.0) | 17 (4.8) |
| Mixed-methods studies | 1 (2.4) | 7 (2.4) | 1 (2.0) | 9 (2.5) |
| Ethnographic or qualitative studies | 1 (2.4) | 22 (7.7) | 0 (0.0) | 23 (6.5) |
| Inconclusive | 0 (0.0) | 2 (0.7) | 3 (6.1) | 6 (1.7) |
| Causality | | | | |
| Plausible | 7 (17.1) | 44 (15.3) | 15 (30.6) | 59 (16.6) |
| Unable to be determined by method | 34 (82.9) | 243 (84.7) | 34 (69.4) | 296 (83.4) |
| Inconclusive | 0 (0.0) | 0 (0.0) | 0 (0.0) | 0 (0.0) |
| Total documents | 41 (100.0) | 287 (100.0) | 49 (100.0) | 355 (100.0) |

Statistical testing revealed no significant organisational differences in methodological distributions after appropriate corrections for multiple comparisons.[69] Figure 4.3 indicates absolute counts and proportions of methodological characteristics in each report’s cited evidence.
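To make the role of a multiple-comparison correction concrete, the following is a minimal sketch, not a reproduction of the chapter's own procedure (which is referenced in note 69 and may differ). It runs a Pearson chi-square test of independence on the "Causality" rows of Table 4.3 and then applies a Bonferroni threshold. The number of comparisons (`N_TESTS`) is a hypothetical value chosen for illustration. Because some articles are cited by more than one report, the per-report columns are not fully independent, so the result should be read as illustrative only.

```python
import math

def chi_square_independence(table):
    """Pearson chi-square statistic and degrees of freedom for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof

def p_value_two_dof(stat, dof):
    """Upper-tail p-value; for exactly 2 degrees of freedom it is exp(-x / 2)."""
    if dof != 2:
        raise ValueError("closed form used here only holds for 2 degrees of freedom")
    return math.exp(-stat / 2)

# Counts from Table 4.3, columns ordered APA, NASEM, OSG.
causality = [
    [7, 44, 15],    # plausibly supports causal inference
    [34, 243, 34],  # unable to be determined by method
]

N_TESTS = 2   # hypothetical number of comparisons being corrected for
ALPHA = 0.05

stat, dof = chi_square_independence(causality)
p = p_value_two_dof(stat, dof)
bonferroni_alpha = ALPHA / N_TESTS
survives = p < bonferroni_alpha

print(f"chi-square = {stat:.2f}, dof = {dof}, p = {p:.3f}")
print(f"Bonferroni threshold = {bonferroni_alpha:.3f}, significant = {survives}")
```

Under these assumptions, a difference that looks notable before correction need not remain significant once the threshold is tightened, which is the general pattern the paragraph above describes.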

Thematic characteristics

Thematic analysis revealed broad similarity in research focus across organisations. Most articles (82%, k = 292) studied general social media use or screen time without specifying platforms, potentially inclusive of TV or gaming. When platforms were specified, Facebook was most common (9%, k = 32), followed by Instagram (3%, k = 12). The most common health outcomes studied were generalised or unspecified mental health (39%, k = 137), depression (15%, k = 53), and body dissatisfaction (6%, k = 21). This is consistent with the historical focus on the negative impacts of media use on mental health, rather than the potential positive outcomes. Approximately 63% (k = 223) focused specifically on adolescent populations. Table 4.4 shows thematic characteristics by report.

| Study characteristics | APA n (%) | NASEM n (%) | OSG n (%) | TOTAL n (%) |
|---|---|---|---|---|
| Platforms studied | | | | |
| General social media or screen time | 36 (87.8) | 235 (81.9) | 38 (77.6) | 292 (82.3) |
| Facebook | 0 (0.0) | 26 (9.1) | 8 (16.3) | 32 (9.0) |
| Instagram | 3 (7.3) | 9 (3.1) | 1 (2.0) | 12 (3.4) |
| Combination of platforms | 1 (2.4) | 6 (2.1) | 2 (4.1) | 6 (1.7) |
| TikTok | 1 (2.4) | 3 (1.0) | 0 (0.0) | 4 (1.1) |
| (platform name missing in source) | 0 (0.0) | 4 (1.4) | 0 (0.0) | 4 (1.1) |
| Inconclusive or unspecified | 0 (0.0) | 3 (1.0) | 0 (0.0) | 4 (1.1) |
| YouTube | 0 (0.0) | 1 (0.3) | 0 (0.0) | 1 (0.3) |
| Health outcomes measured | | | | |
| Generalised mental health | 15 (36.6) | 106 (36.9) | 24 (49.0) | 137 (38.6) |
| Depression | 2 (4.9) | 28 (9.8) | 7 (14.3) | 53 (14.9) |
| Body dissatisfaction | 2 (4.9) | 17 (5.9) | 3 (6.1) | 21 (5.9) |
| Wellbeing or life satisfaction | 3 (7.3) | 17 (5.9) | 3 (6.1) | 20 (5.6) |
| Sleep outcomes | 2 (4.9) | 13 (4.5) | 0 (0.0) | 15 (4.2) |
| Eating disorders or body dysmorphia | 1 (2.4) | 6 (2.1) | 3 (6.1) | 10 (2.8) |
| Generalised physical health | 1 (2.4) | 7 (2.4) | 1 (2.0) | 8 (2.3) |
| Suicidal ideation, attempts, suicide | 2 (4.9) | 6 (2.1) | 1 (2.0) | 8 (2.3) |
| Isolation and loneliness | 2 (4.9) | 3 (1.0) | 1 (2.0) | 6 (1.7) |
| Child sexual abuse | 0 (0.0) | 5 (1.7) | 1 (2.0) | 5 (1.4) |
| Anxiety | 1 (2.4) | 3 (1.0) | 0 (0.0) | 4 (1.1) |
| Drug or alcohol addiction | 1 (2.4) | 2 (0.7) | 1 (2.0) | 4 (1.1) |
| Neurological development | 1 (2.4) | 2 (0.7) | 1 (2.0) | 2 (0.6) |
| Social connections | 0 (0.0) | 1 (0.3) | 0 (0.0) | 1 (0.3) |
| Inconclusive or unspecified[70] | 8 (19.5) | 71 (24.7) | 3 (6.1) | 61 (17.2) |
| Focused on adolescent populations | | | | |
| Yes | 34 (82.9) | 175 (61.0) | 32 (65.3) | 223 (62.8) |
| No mention | 5 (12.2) | 90 (31.4) | 12 (24.5) | 105 (29.6) |
| Inconclusive | 2 (4.9) | 22 (7.7) | 5 (10.2) | 27 (7.6) |
| Total documents | 41 (100.0) | 287 (100.0) | 49 (100.0) | 355 (100.0) |

Statistical tests revealed no significant organisational differences in platforms studied, health outcomes investigated, or focus on adolescent populations.[71]

Summary

The three reports appeared to select broadly similar types of scientific evidence, but contained little overlap with each other. Citation mapping revealed minimal differences in the methodological and thematic characteristics of cited evidence after robust statistical testing. However, only four publications appeared in all three reports, with a further 19 shared between two reports — comprising less than 1% of all cited literature. This fragmentation might reflect methodological differences, distinct disciplinary foci, or the inherently fragmented nature of a rapidly growing research area.

The patterns identified through this analysis reflect broader methodological and thematic constraints well documented by experts in the social media field, including reliance on correlational methodologies and under-specification of both platforms and outcomes. We found that 75–90% of cited work on social media and mental health did not specify which platforms were studied, while over 50% examined general or unspecified mental health outcomes. Only 17% of cited work employed methodologies that could plausibly support causal inferences. These findings echo calls from field experts for future research to prioritise specificity and avoid treating social media use as homogeneous.
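The headline figures in this paragraph can be traced back to the counts in Tables 4.3 and 4.4. The short sketch below recomputes them. One step is our assumption rather than something the chapter states: we read "general or unspecified mental health outcomes" as the sum of the "Generalised mental health" and "Inconclusive or unspecified" outcome rows.

```python
# Counts transcribed from Tables 4.3 and 4.4 (articles whose primary topic
# was social media and adolescent health).
TOTAL_DOCS = {"APA": 41, "NASEM": 287, "OSG": 49, "ALL": 355}
GENERAL_PLATFORM = {"APA": 36, "NASEM": 235, "OSG": 38, "ALL": 292}
PLAUSIBLY_CAUSAL = {"APA": 7, "NASEM": 44, "OSG": 15, "ALL": 59}

# Assumption: "general or unspecified" outcomes combine these two rows.
GENERALISED_MENTAL_HEALTH_ALL = 137
UNSPECIFIED_OUTCOME_ALL = 61

def pct(count, total):
    """Percentage rounded to one decimal place, as reported in the tables."""
    return round(100 * count / total, 1)

platform_share = {k: pct(GENERAL_PLATFORM[k], TOTAL_DOCS[k]) for k in TOTAL_DOCS}
causal_share = {k: pct(PLAUSIBLY_CAUSAL[k], TOTAL_DOCS[k]) for k in TOTAL_DOCS}
broad_outcome_share = pct(
    GENERALISED_MENTAL_HEALTH_ALL + UNSPECIFIED_OUTCOME_ALL, TOTAL_DOCS["ALL"]
)

print("No specific platform (%):", platform_share)
print("Plausibly causal designs (%):", causal_share)
print("General or unspecified outcomes, all reports (%):", broad_outcome_share)
```

On this reading, the per-report share of work that does not name a platform runs from 77.6% to 87.8%, which the text rounds to 75–90%, and the pooled share of plausibly causal designs is 16.6%, rounded to 17%.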


The “evidence profiles” of each report can be best described as follows: the OSG report citations appear highly focused and selective of influential work, the NASEM report citations demonstrate comprehensive breadth across diverse research areas, while the APA reports’ cited work shows lower overall engagement with the literature and is highly self-referential. This raises important questions about evidence readiness for strong policy recommendations from this literature, and suggests that divergences emerge instead from how organisations synthesised, contextualised, and communicated similar bodies of research. This constitutes the focus of our subsequent qualitative analysis.

How is evidence synthesised and communicated?

Methods

Having established that these reports selected broadly similar types of research evidence, we now examine how they communicated it differently using qualitative analysis approaches. The analysis operates at two levels: (1) individual evidence use (how specific studies are cited and interpreted) and (2) broader evidence engagement patterns across each report. Building on Elson et al.'s[72] framework identifying common problems in evidence translation, we developed a coding scheme addressing citation accuracy, evidence integration practices, and rhetorical construction of conclusions. Two researchers initially worked collaboratively to establish protocols and refine categories before systematic coding. Following calibration, the primary coder (SLH) completed analysis using NVivo qualitative software, with themes refined through iterative rounds of coding. The complete coding schema, and list of included examples, is given in Online Appendix 4D. Given the qualitative nature of this analysis and substantial variation in document length (APA: 6-11 pages; NASEM: 287 pages; OSG: 25 pages), quantification of observed instances should be interpreted cautiously. Our findings identify practices that appeared more frequently in certain reports, with examples provided to illustrate these patterns, rather than providing a comprehensive catalog of all instances, or a full citation audit. Complete methodological details are available in Online Appendix 4D.

Results

Thematic analysis identified three distinct dimensions along which the reports varied in their evidence translation practices: (1) citation accuracy and contextual detail, (2) engaging with complexity in evidence integration, and (3) calibrating certainty to conclusion strength. These patterns emerged through systematic application of the coding framework to all reports, examining both individual citation fidelity and broader organisational approaches to evidence synthesis. Variations ranged from rigorous scientific communication practices that maintained research fidelity to concerning patterns that, in our view, at times misrepresented scientific evidence.


Citation accuracy and detail

Analysis revealed substantial variation in how the reports handled individual citations. Some of the most concerning instances involved citations that appeared to be unrelated to the claim they were used to substantiate. For example, the APA report asserted that “infinite scroll is particularly risky for youth since their ability to monitor and stop engagement on social media is more limited than among adults” (APA 2024, p. 2). The citation supporting this claim investigated factors influencing adolescents’ development of effortful control among Mexican-American teenagers, finding that those who experience more hostility from their parents, live in more violent neighbourhoods, or experience more ethnic discrimination tend to exhibit an exacerbated dip in effortful control in their mid-teen years.[73] While the most generous interpretation might connect this research to claims about adolescent self-regulation abilities, the cited study made no conclusions about social media, infinite scroll features, or comparative abilities between youth and adults. Similarly, the claim that “lack of time limits on social media use” (APA 2024, p. 2) is challenging for youth cites studies investigating laptop distraction in classrooms that did not examine social media or mention time limits.

However, most citation inaccuracies involved subtler misrepresentations of findings. For example, the OSG report claimed that graphic self-harm content “can normalise such behaviors,” (OSG, p. 8) citing research that also found social media platforms “commonly supportive and provided a sense of community among users” offering “suggestions for formal treatment, advice on stopping self-harming behavior, and encouragement.”[74] Presenting only the negative findings while omitting the supportive aspects of what is clearly a highly nuanced piece of research oversimplifies the cited work, leaving readers with a misleading impression of its conclusions.

In terms of broader simplification patterns, we found considerable differences in contextual detail given when citing research. The difference between organisational approaches is best exemplified through varying levels of specificity conveying the same piece of evidence. Discussing a large UK study,[75] the NASEM report specified that “the power of social media to influence well-being depended on developmental stage, with girls between ages 11 and 13 and boys between ages 14 and 15 to be in particularly sensitive window” (NASEM, p. 95), compared to the APA report’s less-detailed approach to the same study: “potential risks are likely to be greater in early adolescence” (APA 2023, p. 3), omitting the developmental specificity and gender differences identified in the original research.

A common pattern in simplification involved generalising studies across distinct contexts to make broad claims. For example, the APA report writes “data suggest that youths’ psychological development may benefit from this type of online social interaction [social support and online companionship], particularly during periods of social isolation” (APA 2023, p. 4), citing four studies that investigated: positive and negative online experiences during COVID-19, experiences of Peruvian youth online, TikTok-based treatment adherence for young adults with chronic conditions, and social media self-disclosure among users with Type 1 Diabetes. While individual aspects of these studies support some aspects of the broader claim, this conflation obscures specific findings and inappropriately generalises across distinct contexts to make a broad claim about social support benefits for development.

Engaging with complexity

Moving beyond individual citations, organisations also demonstrated markedly different approaches to synthesising and evaluating the overall evidence base. The NASEM report showed the highest engagement with limitations and contradictory evidence, compared to moderate engagement in the OSG report and the least engagement in APA reports. All reports acknowledged broader constraints in the social media research field, for instance noting that “relatively few studies have been conducted with marginalized populations of youth” (APA 2023, p. 3). However, limitation acknowledgment for individual studies varied substantially. The NASEM report regularly qualified findings: “A 2018 meta-analysis found mobile phone use in the classroom to modestly interfere with student learning and academic performance, although this small effect was driven more by undergraduates than K through 12 students” (p. 101), acknowledging both limited effect size and population specificity affecting generalisation.

Engagement with disconfirmatory evidence also varied considerably. The NASEM report consistently presented contradictory findings even when they complicated organisational positions. After discussing the potential impacts of laptop use in college classes on neighbours’ learning, the NASEM report noted: “That said, an experiment banning laptops from undergraduate classrooms found no benefit, even a possible detriment to learning (as students in the no-laptop group simply did not come to class)” (p. 101). This demonstrated tolerance for presenting genuinely mixed evidence rather than constructing apparent consensus through selective emphasis. Other reports emphasised supportive evidence while minimising contradictory findings, occasionally acknowledging claims as held by “some researchers” (OSG, p. 9) without fully engaging with contested positions.

Calibrating certainty to conclusion strength

Together, these different evidence translation choices shaped how each report translated scientific uncertainty into policy guidance, resulting in clear differences in the reports’ overall rhetorical angle and the strength with which they presented conclusions. Reports varied substantially in the language modality they used to signal confidence levels. The claim that engagement metrics “likely lead to problematic use” (APA 2024, p. 2) was unsupported and not linked to any cited literature. Vague quantification such as “significant negative effects” or “substantial data” (APA 2024, p. 3) limited readers’ ability to evaluate conclusions independently. Frequent references to undefined “harms” further obscured the specific risks under discussion.

These choices culminated in different overall positions. The NASEM report concluded that evidence “did not support the conclusion that social media causes changes in adolescent health at the population level” (p. 94) and noted they “sympathise with some parents’ desire for authoritative prescriptions[…] but [are] mindful of overreaching the data” (p. 120). In contrast, OSG concluded there was insufficient evidence “to conclude that [social media] is sufficiently safe” (p. 11), characterised children as “unknowing participants in a decades-long experiment” (p. 11) and advocated that “we must urgently take action,” (p. 4) concluding that “the evidence noted throughout this Surgeon General’s Advisory necessitates significant concern with the way it is currently designed, deployed, and utilised” (p. 13).

Summary

The aggregation of these practices creates distinct organisational profiles for evidence translation. The NASEM report’s approach, characterised by high limitation acknowledgment, detailed contextual explanations, and consistent engagement with disconfirmatory evidence, reflected extensive methodological transparency. The APA reports’ pattern of citation clumping, minimal limitation acknowledgment, and occasional inappropriate definitiveness suggested prioritisation of clear or actionable guidance over methodological detail. The OSG report demonstrated more moderate limitation acknowledgment, but occasionally presented findings selectively. Together, these dimensions reveal distinct organisational approaches to balancing scientific rigour with public accessibility and policy urgency, at times veering into concerning citation inaccuracies warranting critical attention.

Discussion

Adolescent mental health and social media engagement is an area of active scientific study[76] and it is critical that policymakers benefit from evidence syntheses which present the clearest and highest quality analysis of what is and is not empirically known. To that end, our analysis investigated how three key US professional reports on social media and adolescent mental health used scientific evidence to formulate policy recommendations. We found that divergent policy conclusions did not stem primarily from systematic differences in the types of evidence cited — citation mapping revealed all three reports cited broadly similar types of peer-reviewed research with comparable methodological and thematic characteristics. However, we found considerable heterogeneity in how organisations framed evidence and integrated studies. These findings suggest that synthesis quality among professional organisations warrants greater scrutiny than it typically receives, with implications for evidence-based policy in domains characterised by scientific uncertainty and high public stakes.

Balancing nuance, uncertainty, and utility

The patterns we observed reflect different approaches to handling the challenge of translating complex, uncertain research into clear policy guidance. This translation work involves important trade-offs that organisations must balance between policy utility and scientific specificity.

Evidence synthesis efforts can be understood as existing along a continuum from rigour and comprehensiveness to simplicity and accessibility. Simplified presentations are significantly easier for policymakers and the public to digest and act upon, though as documented throughout this work, this accessibility can sometimes come at the cost of inappropriate overgeneralisations of research findings. NASEM’s comprehensive 287-page report reflects a preference for scientific precision and conveys extensive methodological transparency, but its length also creates substantial barriers to practical engagement. APA and OSG’s briefer formats (6–25 pages) prioritise clear, actionable recommendations but run the risk of oversimplifying a complex and developing research area.

Evidence-based policy reviews take a wide range of forms, serving different audiences and institutional contexts. Strategic simplification is understandable given the practical constraints organisations face: policymakers require guidance they can act upon, public audiences need accessible communication, and lengthy technical reports risk being ignored regardless of scientific merit. Some variation in communication approach therefore reflects legitimate choices about format, audience needs, and the balance between comprehensiveness and utility. In most cases, the variation we observed reflects different organisational tolerance for expressing uncertainty and ambiguity versus preferences for clarity and simplicity in translational choices. This is a hard balance to strike, and much of the variation we documented represents reasonable differences in how organisations navigate these competing demands.

A central aspect of engaging with complexity involves how organisations navigate scientific uncertainty when providing policy guidance. It is important to acknowledge that scientific uncertainty represents intellectual honesty rather than inadequacy. Complex social phenomena — including the relationship between social media use and adolescent wellbeing — often yield findings that are nuanced, context-dependent, and resistant to simple characterisation.[77] When evidence is genuinely mixed or methodologically limited, intellectual honesty about what we do and do not know with confidence serves evidence-based policy better than premature consensus forced through selective emphasis or rhetorical construction of certainty.

This stance does not render scientific evidence unhelpful for policy: policy decisions necessarily proceed despite incomplete information. The question is not whether to act under uncertainty, but how to characterise the evidentiary foundation for different courses of action. Honest synthesis that acknowledges what remains uncertain or contested provides essential information for policy deliberation about appropriate responses under conditions of imperfect knowledge. In contrast, synthesis that obscures complexity or transforms tentative findings into definitive conclusions can misrepresent the state of knowledge and undermine the foundation for informed deliberation. The different approaches to conveying contextual detail and study specifics that we observed reflect varying organisational judgments about how to handle this uncertainty in policy-relevant communication.

However, regardless of format or audience, it is important to promote rigorous quality standards when dealing with complex high-stakes evidence. The differences we observed, particularly in terms of citation accuracy and contextualisation, affect whether readers can independently assess the evidentiary basis for recommendations or must accept claims on organisational authority alone. Our findings suggest considerable variation in how different organisations conducted this synthesis work. Given that all three organisations examined here are well-resourced, scientifically oriented, and operate with explicit mandates to provide authoritative evidence synthesis, it is perhaps reasonable to expect greater consistency in the quality and fidelity of scientific communication. This is important research addressing complex policy questions that affect vulnerable populations, where quality ought to be consistently high.

Some of our observations here are particularly worrying, even if rare. Inaccurate citations where claims are not supported by referenced evidence cannot be justified as a legitimate communication choice. Nor can selectively omitting contradictory findings or major limitations that would fundamentally alter interpretation of research conclusions. Such practices are better characterised as using evidence as “ammunition”, a phrase coined by Weiss to describe invoking the authority of science to legitimise conclusions without substantively engaging with their complexity.[78] This is distinguished from genuinely rigorous evidence-based syntheses, which require maintaining fidelity to source material, honest acknowledgment of uncertainty, and thoughtful treatment of contradictory findings.


When we systematically compared these reports, differences in translation quality became evident. It is clear that researchers and other end users should have more or less confidence in these reports based on whether they demonstrate citation accuracy, balanced presentation of evidence, and appropriate qualification of conclusions. All reports have their limitations, but for high-stakes research in domains affecting adolescent wellbeing, the standards ought to be consistently higher. The substantial variation in approaches to balancing nuance and practical utility suggests that greater care should be taken when translating scientific evidence into policy recommendations. This is particularly important in contested domains characterised by methodological limitations and genuine scientific uncertainty, as is the case for social media and adolescent mental health.

Implications

Effective evidence-based policy development requires clear and accurate information on what science can and cannot tell us about key questions of societal importance. Scientific evidence represents one input among many in democratic policy deliberations, where it will be weighed alongside political considerations, stakeholder perspectives, and value judgments about social priorities. The knowledge we derive from the scientific method can only be as strong as an honest reading of the evidence we have.[79]

Low-quality evidence synthesis carries material costs for policymaking. If evidence is mischaracterised or selectively presented, policies may be poorly calibrated to what is scientifically known. For example, some experts have warned that strict age-based access restrictions for social media use risk disadvantaging young people who benefit from online connection while failing to address actual mechanisms of potential harm.[80] If syntheses misrepresent the state of knowledge, even unintentionally, the resulting policies may target the wrong outcomes or lack strong empirical foundation for their likely effectiveness.

Beyond immediate policy impacts, synthesis quality matters for maintaining public confidence in scientific institutions and the broader credibility of evidence-informed policymaking. When synthesis processes are revealed to mischaracterise research findings — such as through citation inaccuracies, selective omission of contradictory evidence, or inappropriate certainty about contested findings — this can affect public perception of scientific reliability more broadly.[81][82]

Many types of input inform democratic policymaking, including advocacy, anecdote, and values-based arguments about social priorities.[83] In this chapter, we have focused specifically on scientific research evidence as it is translated into policy guidance. As scientists committed to rigorous, transparent, and replicable approaches to understanding complex phenomena, we have a responsibility to consistently uphold standards that justify claims to scientific authority and to identify opportunities for improving practices within our community.

Good governance of evidence

The value of high-quality evidence synthesis has been recognised in many domains. Indeed, scholars have increasingly emphasised the need for improved rigour in primary social and psychological sciences through reproducibility, transparency, and robust methodological practices.[84] Different fields have developed distinct approaches to evaluating evidence quality: formal protocols for systematic reviews and meta-analyses exist (such as PRISMA and Cochrane guidelines), while medicine employs established hierarchies for assessing evidence strength. Social sciences face different methodological constraints, prompting calls for field-appropriate frameworks such as “evidence-readiness levels” that consider replication status and theoretical grounding alongside study design.[85] However, as our findings highlight, selecting high-quality evidence is only part of the challenge. As Parkhurst notes, “good evidence” must be paired with the “good governance of evidence”.[86] Some domains have developed best practices guidance for authoritative bodies conducting science syntheses.[87]

Our analysis identified six practices which, where present, indicated good governance of the scientific literature.

First, citation accuracy and fidelity, the clear correspondence between specific claims and the cited studies, was the most fundamental standard of good evidence governance. Less rigorous synthesis contained some citation inaccuracies, or subtler misrepresentations of evidence. Even though strictly inaccurate citations were rare, their presence at all is worrying. Better reports avoided using evidence as “ammunition” and engaged precisely with every piece of cited literature.

Secondly, strong synthesis provided suitable contextualisation about cited studies including information about methodology, sample characteristics, and specific findings. Less detailed work cited multiple studies relating to a single claim without substantive discussion of their content, seeming to use citations decoratively instead of supporting a specific point of knowledge. At times, research was generalised very broadly across diverse national, cultural, ethnic, or other contexts. Better instances of evidence integration were highly specific about the contexts in which the studies were conducted, enabling an accurate representation of the underlying research.

Thirdly, good governance was characterised by systematic limitation acknowledgement where such limitations affect how a study, or a field of studies, might be interpreted, including practical effect sizes, constraints on generalisability, and methodological constraints. Reports which convey field-wide limitations can help their readers qualify the findings of individual studies and gain a more accurate picture of the state of scientific knowledge as it grows and develops over time.

Fourthly, reports which engaged with disconfirmatory evidence and demonstrated tolerance for presenting contradictory findings enacted better evidence governance than those which sought to construct apparent consensus through selective emphasis. When research yielded mixed results, rigorous work acknowledged this complexity rather than highlighting only supportive evidence.

Fifthly, the best syntheses used language appropriately calibrated to confidence in the underlying research. Weaker approaches demonstrated inappropriate definitiveness, used vague terms such as “significant effects”, or discussed unspecified “harms”. In general, better reports erred on the more cautious end of the spectrum, demonstrating intellectual humility and avoiding overly certain statements.

Finally, high-quality reviews documented how evidence was identified, selected, and evaluated, displaying process transparency including committee composition, funding sources, any conflicts of interest, and the scope of literature reviewed. It is important for readers to be able to understand the intended aims and goals of the organisations, such that they might be able to critically calibrate their level of trust in these types of reports.

Table 4.5 summarises these practices and tentatively suggests some practical ways in which they might be better achieved. Some of these approaches are more feasible and simple, whereas others are more of an ideal requiring some level of systemic change.

| Practice observed | Indicator of quality | Possible implementation approaches |
|---|---|---|
| Citation accuracy and fidelity | Clear correspondence between specific claims and cited studies; absence of subtle misrepresentation | Dedicated verification staff (cf. legal scholarship)*; external citation audits; citation styles requiring authors to specify how sources support claims** |
| Suitable contextualisation | Substantive discussion of methodology, sample characteristics, and specific findings for cited studies; citations used to support specific knowledge claims rather than decoratively | Structured presentation moving from most to least relevant context (e.g., national context first, then international evidence)*; required methodological summaries for key cited studies** |
| Systematic limitation acknowledgement | Explicit discussion of practical effect sizes, generalisability constraints, and methodological limitations that affect interpretation | Standardised limitation reporting requirements*; consideration of publication bias analogous to systematic review protocols** |
| Engagement with disconfirmatory evidence | Acknowledgment of mixed findings and contradictory evidence; tolerance for complexity rather than construction of apparent consensus | Explicit statement of what evidence would change authors' conclusions*; adversarial collaboration or intentional recruitment of authors with opposing views** |
| Language is appropriately calibrated to confidence | Precision in communicating uncertain findings; avoidance of inappropriate definitiveness and vague terms like "significant effects" | Editorial review specifically for overclaiming*; standardised confidence language (cf. IPCC uncertainty guidance)** |
| Process transparency | Documentation of how evidence was identified, selected, and evaluated; disclosure of committee composition, conflicts of interest, and scope | Development of reporting checklists for reports analogous to Cochrane protocols or PRISMA*; public pre-registration of synthesis scope and methods** |

Note: * denotes lower-resource approaches; ** denotes more resource-intensive or systemic changes.

These six practices are consistent with those proposed by other scholars almost a decade ago who recommended that professional organisations acknowledge disconfirmatory data, focus on effect sizes, acknowledge methodological limitations, solicit balanced views, avoid secondary sources, distinguish scientific statements from advocacy statements, be mindful of unintended harms, and prioritise open science practices.[88] The persistence of these issues, documented first by Elson and colleagues, suggests that translation quality in policy statements still requires attention.

Limitations and future directions

It would be remiss of us to neglect a thorough discussion of our own limitations.[89] Further methodological particulars are available in the online appendices.

Scope constraints

Our analysis examined the reports of three US-based organisations during a specific period (2023–2024) when social media regulation received heightened political attention, potentially creating unique pressures that may not apply during routine policy development. Findings may not generalise to non-US organisations, other types of scientific advisory bodies, or policy domains with different characteristics. The focus on total cited evidence, rather than distinguishing which citations directly supported organisational arguments from those cited for critique or context, represents another constraint. This approach cannot distinguish between confirmatory and disconfirmatory treatment of evidence (e.g., a report might include studies primarily to critique them, affecting quality metrics in ways that do not reflect actual selection patterns).

Further, we did not systematically examine how organisations reasoned from evidence to specific policy recommendations. In other words, we did not judge whether proposed interventions were themselves supported by empirical evidence. Identifying that a problem exists — whether rightly or wrongly — does not necessitate or justify any particular policy action. Intervention science examines such questions systematically, comparing policy approaches and their likely effects. Future research examining this dimension would provide important insights into the complete evidence-to-policy chain, evaluating not only how organisations synthesised evidence about problems but also how they justified specific interventions as appropriate responses.

Methodological limitations

Our classification approach for coding evidence characteristics, despite achieving high accuracy in validation testing overall, produced a number of “inconclusive” classifications that limited the precision of statistical analysis for the “effects” category.[90] Future studies might employ hybrid approaches combining automated classification with comprehensive human verification to improve reliability. More fundamentally, document-based analysis cannot access deliberative processes such as committee discussions, internal organisational pressures, or external political factors that may have significantly influenced synthesis choices but remain inaccessible through examining final outputs alone.

Our qualitative analysis reflects analytical decisions about how to characterise evidence translation practices. As widely noted, qualitative research is poorly suited to direct replication efforts; nonetheless, our reporting aims to promote systematic transparency in our analytical choices.[91] Different researchers might construct alternative categorisation schemes revealing different patterns. Our qualitative analysis is not a comprehensive catalogue of every instance in which a theme was identified and is not intended as a complete citation audit. As a result, there may be other instances of each category within the reports that other researchers might identify.

Additionally, resource constraints limited full comparative analysis to a single coder following collaborative protocol development. We welcome future research appraising quality patterns in evidence use for professional science organisations reviewing social media and mental health research, whether using our framework or developing alternative approaches (see Online Appendix 4D for more detailed qualitative methodology).

Future directions

These limitations suggest several promising research directions. First, investigating the deliberative processes through ethnographic observation of evidence synthesis committees or interviews with committee members could reveal how organisational context, political pressures, and interpersonal dynamics shape synthesis choices in ways not visible in final documents. Second, examining how different stakeholders, including policymakers, practitioners, advocacy groups, and journalists, actually use and interpret translated evidence in policy processes would illuminate how much the synthesis quality differences we identified matter for downstream policy development and public understanding. Third, systematically comparing evidence bases invoked to support specific policy interventions would extend our focus beyond problem characterisation to intervention justification. Fourth, applying similar analytical frameworks to other policy domains (climate science, public health, education policy) would assess whether patterns we identified in social media and adolescent mental health research reflect broader challenges in evidence synthesis or domain-specific issues. Additionally, longitudinal analysis tracking how synthesis practices evolve as research fields mature and evidence accumulates could identify factors that promote improvement in synthesis quality over time. Finally, it would be beneficial to track whether differences in synthesis report styles or qualities predict uptake by government or policy leaders. This might enable a better understanding of whether highly detailed and balanced reports are leveraged by policymakers more or less than shorter, simplified, or perhaps over-confident, reports.

Conclusions

Making the best use of scientific knowledge to inform effective policy is challenging. Today, the question of social media engagement and adolescent mental health is framed mainly in terms of producing high-quality original research,[92] but less emphasis has been placed on how to rigorously translate this literature into coherent policy recommendations. Our study represents a step towards developing higher standards for this process. We demonstrated there are considerable qualitative differences in how well organisations synthesise and communicate evidence. Indeed, many of the practices we identified, like fidelity to source material, precision in citations, and intellectual humility in the face of uncertainty, distinguish scientific synthesis from other aspects of policymaking such as lobbying and advocacy. If history is any guide, today’s focus on young people and social media will, in time, give way to new hopes and concerns about augmented reality and artificial intelligence. It is our sincere hope that when this time comes, those producing and using scientific research will understand that taking these questions of societal importance seriously requires equal rigour in the interpretation and communication of our science as it does in its creation.

References

Adjei, N. K., Schlüter, D. K., Straatmann, V. S., Melis, G., Fleming, K. M., McGovern, R., Howard, L. M., Kaner, E., Wolfe, I., & Taylor-Robinson, D. C. (2021). Impact of poverty and family adversity on adolescent health: A multi-trajectory analysis using the UK Millennium Cohort Study. The Lancet Regional Health - Europe, 13, 100279. https://doi.org/10.1016/j.lanepe.2021.100279

Alonzo, R., Hussain, J., Stranges, S., & Anderson, K. K. (2021). Interplay between social media use, sleep quality, and mental health in youth: A systematic review. Sleep Medicine Reviews, 56, 101414. https://doi.org/10.1016/j.smrv.2020.101414

Anvari, F., & Lakens, D. (2018). The replicability crisis and public trust in psychological science. Comprehensive Results in Social Psychology, 3(3), 266–286. https://doi.org/10.1080/23743603.2019.1684822

APA (American Psychological Association). (2021). APA guidelines on evidence-based psychological practice in health care. https://www.apa.org/about/policy/psychological-practice-health-care.pdf

APA (American Psychological Association). (2023). Health advisory on social media use in adolescence. https://www.apa.org/topics/social-media-internet/health-advisory-adolescent-social-media-use.pdf

APA (American Psychological Association). (2024). Potential risks of content, features, and functions: The science of how social media affects youth. https://www.apa.org/topics/social-media-internet/youth-social-media-2024

Appel, M., Marker, C., & Gnambs, T. (2020). Are social media ruining our lives? A review of meta-analytic evidence. Review of General Psychology, 24(1), 60–74. https://doi.org/10.1177/1089268019880891

Atherton, O. E., Lawson, K. M., & Robins, R. W. (2020). The development of effortful control from late childhood to young adulthood. Journal of Personality and Social Psychology, 119(2), 417–456. https://doi.org/10.1037/pspp0000283

Bastow, S., Dunleavy, P., & Tinkler, J. (2014). Social Science for a Digital Era. In The Impact of the Social Sciences: How Academics and Their Research Make a Difference (pp. 271–294). SAGE Publications Ltd. https://doi.org/10.4135/9781473921511

Bogenschneider, K., & Corbett, T. (2021). Evidence-Based Policymaking: Envisioning a New Era of Theory, Research, and Practice (2nd ed.). Routledge. https://doi.org/10.4324/9781003057666

Boniel-Nissim, M., Marino, C., Galeotti, T., Blinka, L., Ozoliņa, K., Craig, W., Lahti, H., Wong, S. L., Brown, J., Wilson, M., & Inchley, J. (2024). A focus on adolescent social media use and gaming in Europe, central Asia and Canada. World Health Organization. https://iris.who.int/handle/10665/378982

Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77–101. https://doi.org/10.1191/1478088706qp063oa

Cairney, P. (2016). The Politics of Evidence-Based Policy Making. Palgrave Macmillan UK. https://doi.org/10.1057/978-1-137-51781-4

Cairney, P. (2022). The myth of ‘evidence-based policymaking’ in a decentred state. Public Policy and Administration, 37(1), 46–66. https://doi.org/10.1177/0952076720905016

Cairney, P., & Oliver, K. (2017). Evidence-based policymaking is not like evidence-based medicine, so how far should you go to bridge the divide between evidence and policy? Health Research Policy and Systems, 15(1), 35. https://doi.org/10.1186/s12961-017-0192-x

Calvin, K., Dasgupta, D., Krinner, G., Mukherji, A., Thorne, P. W., Trisos, C., Romero, J., Aldunce, P., Barrett, K., Blanco, G., Cheung, W. W. L., Connors, S., Denton, F., Diongue-Niang, A., Dodman, D., Garschagen, M., Geden, O., Hayward, B., Jones, C., … Péan, C. (2023). IPCC, 2023: Climate Change 2023: Synthesis Report. Contribution of Working Groups I, II and III to the Sixth Assessment Report of the Intergovernmental Panel on Climate Change [Core Writing Team, H. Lee and J. Romero (eds.)]. IPCC, Geneva, Switzerland. (First). Intergovernmental Panel on Climate Change (IPCC). https://doi.org/10.59327/IPCC/AR6-9789291691647

Clarke, B., Alley, L. J., Ghai, S., Flake, J. K., Rohrer, J. M., Simmons, J. P., Schiavone, S. R., & Vazire, S. (2024). Looking our limitations in the eye: A call for more thorough and honest reporting of study limitations. Social and Personality Psychology Compass, 18(7), e12979. https://doi.org/10.1111/spc3.12979

Cologna, V., Mede, N. G., Berger, S., Besley, J., Brick, C., Joubert, M., Maibach, E. W., Mihelj, S., Oreskes, N., Schäfer, M. S., van der Linden, S., Abdul Aziz, N. I., Abdulsalam, S., Shamsi, N. A., Aczel, B., Adinugroho, I., Alabrese, E., Aldoh, A., Alfano, M., … Zwaan, R. A. (2025). Trust in scientists and their role in society across 68 countries. Nature Human Behaviour, 9(4), 713–730. https://doi.org/10.1038/s41562-024-02090-5

Coyne, P., Voth, J., & Woodruff, S. J. (2023). A comparison of self-report and objective measurements of smartphone and social media usage. Telematics and Informatics Reports, 10, 100061. https://doi.org/10.1016/j.teler.2023.100061

Davies, H. T. O., Nutley, S. M., & Smith, P. C. (Eds.). (2000). What works?: Evidence-based policy and practice in public services (1st ed.). Bristol University Press. https://doi.org/10.2307/j.ctt1t892t3

Dunnwald, M., DeLeon, V. B., & Burrows, A. M. (2025). The importance of science communication and public engagement to professional associations. Anatomical Sciences Education, 18(12), 1440–1446. https://doi.org/10.1002/ase.70017

Dyson, M. P., Hartling, L., Shulhan, J., Chisholm, A., Milne, A., Sundar, P., Scott, S. D., & Newton, A. S. (2016). A systematic review of social media use to discuss and view deliberate self-harm acts. PLOS ONE, 11(5), e0155813. https://doi.org/10.1371/journal.pone.0155813

Elson, M., Ferguson, C. J., Gregerson, M., Hogg, J. L., Ivory, J., Klisanin, D., Markey, P. M., Nichols, D., Siddiqui, S., Wilson, J., & (Division 46 News Media, Public Education and Public Policy Committee). (2019). Do Policy Statements on Media Effects Faithfully Represent the Science? Advances in Methods and Practices in Psychological Science, 2(1), 12–25. https://doi.org/10.1177/2515245918811301

European Commission. (2024). Living guidelines on the responsible use of generative AI in research. EU. https://research-and-innovation.ec.europa.eu/document/2b6cf7e5-36ac-41cb-aab5-0d32050143dc_en

Feng, G. C. (2024). Best practices for responsibly using AI tools in social sciences research. Cogent Social Sciences, 10(1), 2420484. https://doi.org/10.1080/23311886.2024.2420484

Ferguson, C. J. (2020). Aggressive video games research emerges from its replication crisis (Sort of). Current Opinion in Psychology, 36, 1–6. https://doi.org/10.1016/j.copsyc.2020.01.002

Ferguson, C. J., Kaye, L. K., Branley-Bell, D., & Markey, P. (2025). There is no evidence that time spent on social media is correlated with adolescent mental health problems: Findings from a meta-analysis. Professional Psychology: Research and Practice, 56(1), 73–83. https://doi.org/10.1037/pro0000589

Foulkes, L., & Andrews, J. L. (2023). Are mental health awareness efforts contributing to the rise in reported mental health problems? A call to test the prevalence inflation hypothesis. New Ideas in Psychology, 69, 101010. https://doi.org/10.1016/j.newideapsych.2023.101010